This is part 2 of the blog post:

Part 1: Requirements | Deployed Solution | Installation & Configuration

Part 2: Network configuration | LUN Design | VMware vSphere HA | Failure scenarios

Network configuration

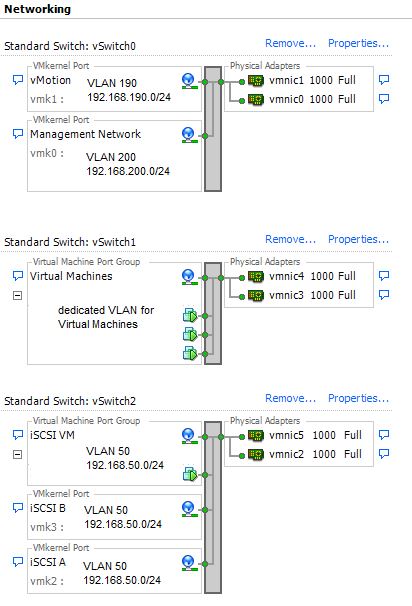

(using six 1 Gbit adapters)

I configured three virtual standard switches:

- vSwitch 0 (pNIC 0 and 1): hosting vMotion and Management Network (each one using a dedicated VLAN)

- vSwitch 1 (pNIC 3 and 4): hosting the virtual machine network

- vSwitch 2 (pNIC 2 and 5): hosting the iSCSI networks

When configuring the network, note that flow control must be enabled on the iSCSI ports.

A note about the division of the physical network cards:

The HP ProLiant ML350 Gen9 server has four on-board NICs, and a 2-port network card was added. To avoid a single point of failure, vSwitch 1 and vSwitch 2 each use one on-board port and one port of the add-on card.
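
The split can be checked mechanically. The following is only an illustrative Python sketch, not part of the deployed configuration. It assumes that vmnic0 to vmnic3 are the on-board ports and vmnic4 and vmnic5 sit on the add-on card, which this post does not state explicitly. The names `PNIC_ADAPTER`, `VSWITCH_UPLINKS` and `spans_both_adapters` are made up for the example.

```python
# Assumed mapping of pNIC number to physical adapter (not stated in the post):
# vmnic0-3 = on-board NICs, vmnic4-5 = add-on 2-port card.
PNIC_ADAPTER = {
    0: "onboard", 1: "onboard", 2: "onboard", 3: "onboard",
    4: "addon", 5: "addon",
}

# Uplinks per vSwitch, as listed in the network configuration above.
VSWITCH_UPLINKS = {
    "vSwitch0": [0, 1],  # vMotion and Management Network
    "vSwitch1": [3, 4],  # virtual machine network
    "vSwitch2": [2, 5],  # iSCSI networks
}


def spans_both_adapters(uplinks):
    """Return True if the uplinks are spread over more than one physical adapter."""
    return len({PNIC_ADAPTER[nic] for nic in uplinks}) > 1


def redundancy_report():
    """Map each vSwitch name to whether it survives the loss of a single adapter."""
    return {name: spans_both_adapters(nics) for name, nics in VSWITCH_UPLINKS.items()}
```

Under the assumed numbering, `redundancy_report()` marks vSwitch 1 and vSwitch 2 as spread across both adapters, while vSwitch 0 uses two on-board ports.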

LUN Design:

You should invest some time in your LUN design. In the example described in this blog post I configured only two LUNs:

- LUN 1 is really large, hosting all the virtual machines

- LUN 2 is really small (only 1 GB) to provide a second datastore for HA datastore heartbeat

VMware vSphere HA (High Availability):

VMware vSphere HA is part of the VMware ROBO license. In this use case, this important feature was configured as follows:

- VMware vSphere HA is enabled

- Datastore Heartbeat: HA requires a minimum of two datastores for Datastore Heartbeating. To fulfill this requirement a second, small datastore was configured

- Host Isolation response: Power off
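
The three settings above can be written down as a small checklist. The following Python sketch is only an illustration of that checklist; it does not talk to vCenter, and the class `HaSettings`, the function `validate` and the datastore names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class HaSettings:
    """Plain description of the HA settings discussed in this post."""
    enabled: bool
    isolation_response: str
    heartbeat_datastores: list = field(default_factory=list)


def validate(settings):
    """Return a list of deviations from the configuration described above."""
    problems = []
    if not settings.enabled:
        problems.append("vSphere HA is disabled")
    if len(settings.heartbeat_datastores) < 2:
        problems.append("fewer than two heartbeat datastores")
    if settings.isolation_response != "Power off":
        problems.append("isolation response is not 'Power off'")
    return problems


# Hypothetical datastore names: one large LUN for the VMs, one small 1 GB LUN.
described = HaSettings(True, "Power off", ["ds-vms", "ds-heartbeat"])
```

Calling `validate(described)` returns an empty list. Removing the small second datastore or changing the isolation response produces a matching entry in the result.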

Why I chose "Power off" as the Host Isolation Response:

Let’s start with the reasons why I do not want to use the other responses:

Shut down:

In a host isolation scenario, VMs running in the affected site experience I/O failures because the VSA stops operating. A clean shutdown is therefore not possible.

Leave powered on:

In a host isolation scenario, VMs running in the affected site experience I/O failures because the VSA stops operating. HA restarts the affected VMs on the host in the surviving site. After the failed node comes back online, the same VM is running on both sites, and in the worst case you end up with a split-brain scenario.

Last but not least, the reason why I decided to use "Power off":

VMs running in the affected site are powered off and HA will restart them in the surviving site. After the failed node comes back online, all affected volumes resync automatically.

Failure scenarios:

| Scenario | Impact |
|---|---|
| ESXi host failure/complete site failure | Virtual machines running at the failed site/at the failed host fail. VMware HA restarts the virtual machines on the host in the surviving site. |
| HP VSA virtual machine failure | no impact - VMs still have access to the datastores provided by the VSA of the other site. After failed storage node comes back online, volumes resync automatically. |
| inter-site link failure between site A and B | no impact as long as every site can still access the quorum witness |
| Host isolated from other site and Quorum Witness | VMs running on the isolated host perform the action configured in the "Host Isolation Response". VSA of the affected site stops I/O to the datastores. No impact for the surviving site as long as it can access the Quorum Witness. |
| connection lost to Quorum Witness | no impact as long as inter-site link between site A and B is functional |
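
Read from the point of view of a single site, the three connectivity rows of the table above boil down to one rule: the site is unaffected as long as it can still reach either the other site or the Quorum Witness. The Python sketch below only restates that rule; it is a simplified model of the table, not a description of how the VSA arbitrates quorum internally, and the function names are hypothetical.

```python
def vsa_keeps_serving_io(reaches_other_site, reaches_witness):
    """Per the table: a site's VSA keeps serving I/O while it reaches the
    other site or the Quorum Witness (simplified reading, see lead-in)."""
    return reaches_other_site or reaches_witness


def impact(reaches_other_site, reaches_witness):
    """Return the impact for one site given its two connectivity states."""
    if vsa_keeps_serving_io(reaches_other_site, reaches_witness):
        return "no impact"
    return "host isolated: VSA stops I/O, VMs perform the Host Isolation Response"
```

For example, `impact(False, True)` corresponds to the inter-site link failure row and `impact(True, False)` to the lost Quorum Witness row. Only `impact(False, False)` describes the isolated host.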


Great post!!

One question: how are the physical switches configured in this environment?

vmnic 0, 1, 2 and 5 with VLAN tag configuration, and vmnic 3 and 4 without VLAN configuration?

Cheers

Daniel

vSwitch1 – vmnic 3 & 4 is tagged, too

Sorry, I actually meant to ask about the trunk configuration: are all ports in trunk mode, or just vmnic 0, 1, 2 and 5?