If you use thin-provisioned VMFS datastores, you may run into the problem that unused space on the storage system is not released automatically when you delete or migrate a virtual machine.

For example:

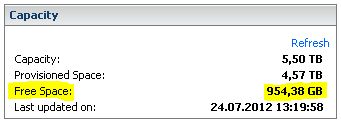

A virtual machine is migrated from one datastore to another. If you now look at the value displayed for “free space” in the vCenter “Datastores and Datastore Clusters” view, everything looks fine:

But if you look at the datastore with, e.g., the NetApp Virtual Storage Console plug-in, you will see that the space was not released from the viewpoint of the storage system:

What is the reason for this?

If you delete a file on a VMFS Datastore, the file system marks the affected blocks as unoccupied and reusable.

Unfortunately, this information is not passed on to the storage system, which believes that the free blocks are still in use.

With vSphere 5, VMware introduced a new VAAI primitive called thin provisioning block space reclamation (UNMAP).

This VAAI primitive should ensure that the storage system is informed if something is deleted and that the capacity is automatically released.

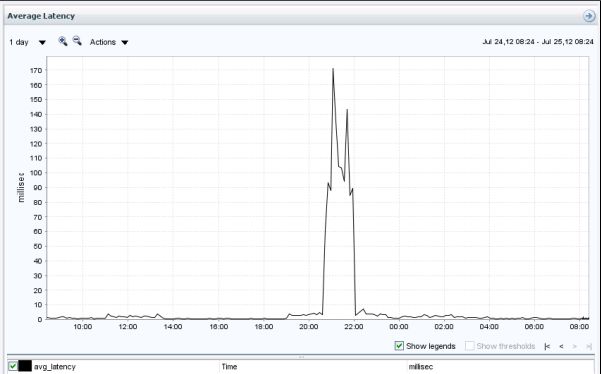

However, performance problems occurred because these operations caused high storage I/O and high latency.

VMware therefore recommended disabling the feature (it is actually disabled by default).

With ESXi 5.0 Update 1, the command-line tool “vmkfstools” was extended with an unmap parameter.

So it is now possible to release unused space manually as needed (but make sure that your storage vendor supports this as well!).

How to:

First you have to check whether your datastore supports thin provisioning block space reclamation.

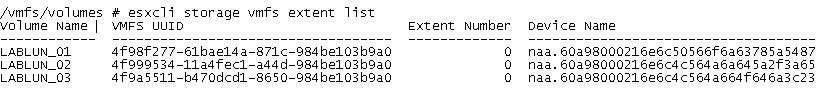

This requires knowing the naa ID of the affected LUN. You can find it with the command:

esxcli storage vmfs extent list

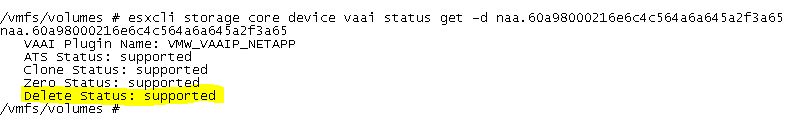
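If you only need the device name for one datastore, the table can be filtered directly in the shell. The following is a minimal sketch, assuming the usual table layout (volume name in the first column, device name starting with `naa.`). The function name `naa_for_datastore` and all sample values are invented for illustration.

```shell
#!/bin/sh
# Hypothetical helper: reads `esxcli storage vmfs extent list` output on stdin
# and prints the naa device name(s) backing the given datastore.
naa_for_datastore() {
    awk -v ds="$1" '
        # Only look at rows whose first column is exactly the datastore name.
        $1 == ds {
            # The device name is the field that starts with "naa.".
            for (i = 2; i <= NF; i++)
                if ($i ~ /^naa\./) print $i
        }'
}

# Invented sample input standing in for real esxcli output.
sample_extents='Volume Name  VMFS UUID                            Extent Number  Device Name                           Partition
-----------  -----------------------------------  -------------  ------------------------------------  ---------
datastore1   4de4cb24-4cff750f-85f5-0019b9f1ecf6              0  naa.6001c230d8abfe000ff76c198ddbc13e          3
datastore2   4de4cb25-5d0a861f-96a6-0019b9f1ecf6              0  naa.6001c230d8abfe000ff76c198ddbc13f          1'

printf '%s\n' "$sample_extents" | naa_for_datastore datastore2
```

On a host you would pipe the real command into the function instead, for example `esxcli storage vmfs extent list | naa_for_datastore datastore1`. Note that this sketch does not handle datastore names containing spaces.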

Now you can list all supported VAAI primitives:

esxcli storage core device vaai status get -d naa.xxxxxxxxxxxxxxxxxxxxxxxxxx

What matters here is that “Delete Status” is reported as supported.

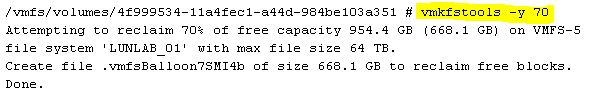
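If you want to check this in a script rather than by eye, the status output can be tested for the relevant line. This is only a sketch, assuming the output contains a line of the form `Delete Status: supported` or `Delete Status: unsupported`. The function name `unmap_supported` and the sample values are made up for illustration.

```shell
#!/bin/sh
# Hypothetical helper: reads `esxcli storage core device vaai status get`
# output on stdin and succeeds only if Delete Status is "supported".
unmap_supported() {
    awk '
        # Remember the last word of the Delete Status line.
        /Delete Status:/ { status = $NF }
        # Exit code 0 if supported, 1 otherwise (including a missing line).
        END { exit (status == "supported") ? 0 : 1 }'
}

# Invented sample input standing in for real esxcli output.
sample_vaai='naa.6001c230d8abfe000ff76c198ddbc13e
   VAAI Plugin Name: VMW_VAAIP_NETAPP
   ATS Status: supported
   Clone Status: supported
   Zero Status: supported
   Delete Status: supported'

if printf '%s\n' "$sample_vaai" | unmap_supported; then
    echo "UNMAP supported"
else
    echo "UNMAP not supported"
fi
```

On a host the input would come from the real command, with the naa ID of your LUN passed via `-d`.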

Now you can release a desired percentage of the free space with the command “vmkfstools -y”. Run it from within the datastore's own directory under /vmfs/volumes.

If you want to release 70 percent of the free space, append 70 to the command:

vmkfstools -y 70
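To get a feel for what a given percentage means in absolute terms, the arithmetic can be sketched as follows. The helper name `reclaim_target_gib` and the 500 GiB figure are assumptions for illustration only. Take the real free space value from vCenter.

```shell
#!/bin/sh
# Hypothetical helper: given the free space in GiB and a percentage, prints
# how many GiB of free space the reclaim run targets (integer, rounded down).
reclaim_target_gib() {
    free_gib=$1
    percent=$2
    echo $(( free_gib * percent / 100 ))
}

# Example: 500 GiB free, 70 percent requested.
reclaim_target_gib 500 70   # prints 350
```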

Note that this process can cause high latency on the affected LUNs, so it is best done during a maintenance window.

Here you can see the real-life latency caused by the command in the example above:

Thank you for a guide, but my delete status is unsupported. This “how to” is easy to read, good work.

delete status is unsupported as well

what does the status of supported/unsupported depend on and can it be changed?